What a signed distance field is and what it can be used for

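A signed distance field (SDF) stores, for each point, the distance from that point to the boundary of a set; the sign of the value tells whether the point lies outside or inside the set. SDFs have a wide range of uses: generating Voronoi diagrams easily, computing global illumination, morphing smoothly between two shapes, rendering crisp high-resolution fonts, and ray marching (although I think that is less efficient than intersecting rays with the geometry directly). Genshin Impact, for example, uses an SDF-based method to generate the shadow map for character faces, so that the facial shadow changes naturally.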
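To make the definition concrete, here is a minimal brute-force sketch in Python (CPU only, not the GPU method this article builds). It assumes a small binary mask, measures distances between pixel centres in pixel units, and uses negative values inside the shape. The function name `brute_force_sdf` is made up for illustration.

```python
import math

def brute_force_sdf(mask):
    """Signed distance per pixel: negative inside, positive outside.

    mask[y][x] is True for pixels inside the shape. The distance is taken
    to the nearest pixel of the opposite kind, centre to centre.
    """
    h, w = len(mask), len(mask[0])
    sdf = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            best = math.inf
            for v in range(h):
                for u in range(w):
                    if mask[v][u] != mask[y][x]:
                        best = min(best, math.hypot(u - x, v - y))
            sdf[y][x] = -best if mask[y][x] else best
    return sdf

# A 3x3 block of inside pixels in the middle of a 5x5 image.
mask = [[1 <= x <= 3 and 1 <= y <= 3 for x in range(5)] for y in range(5)]
sdf = brute_force_sdf(mask)
print(sdf[2][2], sdf[0][2])
```

This costs O(N^2) for N pixels, which is exactly the cost the Jump Flooding Algorithm avoids.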

So what is the Jump Flooding Algorithm?

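The Jump Flooding Algorithm (JFA) was proposed by Rong Guodong (荣国栋) in his PhD thesis, Jump Flooding Algorithm On Graphics Hardware And Its Applications. It runs on the GPU and quickly propagates the information of one pixel to other pixels.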

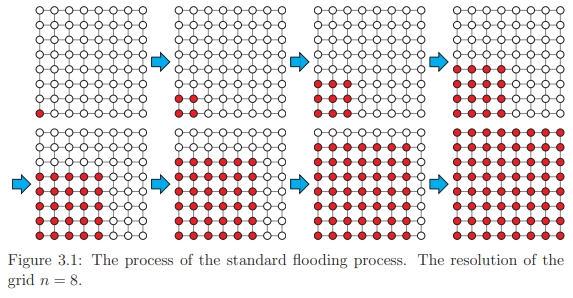

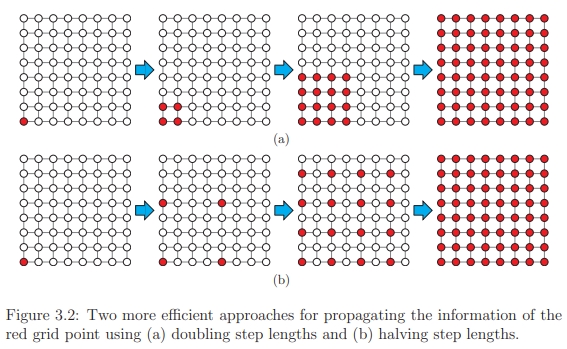

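In each pass, plain flooding always propagates information to pixels at a fixed distance of one pixel, whereas jump flooding propagates with a step that grows or shrinks by powers of two. This is much the same idea as Reduction, the parallel-computing technique mentioned earlier. The figures here show the plain flooding and jump flooding processes.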
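The difference in pass count is easy to see in one dimension. The following is a rough Python sketch, assuming a row of `n` cells, a set of seed indices (at least one), and the decreasing step schedule; out-of-range neighbours are simply skipped. All function names are hypothetical.

```python
def step_1d(nearest, step):
    """One pass: every cell looks at the two cells `step` away."""
    n = len(nearest)
    out = list(nearest)  # read from `nearest`, write to `out`
    for i in range(n):
        for j in (i - step, i + step):
            if 0 <= j < n and nearest[j] is not None:
                cand = nearest[j]
                if out[i] is None or abs(cand - i) < abs(out[i] - i):
                    out[i] = cand
    return out

def flood_1d(seeds, n):
    """Plain flooding: step is always 1, repeat until every cell is filled."""
    nearest = [i if i in seeds else None for i in range(n)]
    passes = 0
    while any(v is None for v in nearest):
        nearest = step_1d(nearest, 1)
        passes += 1
    return nearest, passes

def jump_flood_1d(seeds, n):
    """Jump flooding: start at half the size (rounded up) and halve each pass."""
    nearest = [i if i in seeds else None for i in range(n)]
    step = (n + 1) // 2
    passes = 0
    while True:
        nearest = step_1d(nearest, step)
        passes += 1
        if step == 1:
            break
        step = (step + 1) // 2
    return nearest, passes

flood_result, flood_passes = flood_1d({0}, 16)
jump_result, jump_passes = jump_flood_1d({0}, 16)
print(flood_passes, jump_passes)
```

With a single seed at the left end of 16 cells, plain flooding needs one pass per cell of distance, while jump flooding finishes in a logarithmic number of passes.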

Using JFA to compute the SDF texture for a 2D image

First, create JumpFlooding.cs, JFAComputeShader.compute, and JFAVisualize.shader in Unity. They are used, respectively, to dispatch the compute shader, to compute the SDF with JFA, and to visualize the JFA result.

The 2D input image used here is a texture whose RGB channels are gray and whose alpha channel contains the letters "JFA".

Overall approach and things to watch out for

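- Start with the simplest working implementation and ignore anti-aliasing for now: threshold the alpha channel at 0.5 to split the image into inside and outside. Values greater than or equal to 0.5 count as inside and values below 0.5 as outside, which is the sign convention the ComposeMain kernel applies.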

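- An SDF needs distances. Here the distance is measured from the centre of one pixel to the centre of another (measuring from bottom-left corner to bottom-left corner also works, but adding the half-pixel offset makes the intent explicit). The distance can be expressed in UV units or in pixels; because the image width and height may differ, the distance between two pixels is expressed here with one pixel width equal to 1. When sampling the JFA render texture at the end, use sampler_PointClamp, and do not compute the distance from the raw UV alone: use the position of the pixel centre.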

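- The basic JFA uses two channels of the render texture to record, for each pixel, the UV of its nearest boundary pixel. Because these are UV values rather than colors, the render texture must be stored in linear space. To compute the signed distance to the boundary for both inside and outside pixels at once, this implementation uses all four channels: the first two hold the boundary-pixel coordinates for inside pixels, and the last two hold them for outside pixels. That is, an inside pixel stores (nearestUV.x, nearestUV.y, Z, W) and an outside pixel stores (X, Y, nearestUV.x, nearestUV.y). The remaining pair (X, Y or Z, W) can then mark whether the pixel is an original inside or outside pixel, a pixel that already carries information propagated by JFA, or a pixel that does not carry any yet.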

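- Before the JFA passes, the texture has to be seeded with JFA information. In CopyUVMain, pixels at or above the 0.5 threshold are initialized to (-1, -1, UV.x, UV.y) and pixels below it to (UV.x, UV.y, -1, -1), where -1 marks a channel pair that has not received any information yet. Because the texture has to hold -1 as well as UV values, the render texture format must be at least R16G16B16A16 SFloat.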

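- Taking outside pixels as the example, each JFA pass does the following. Sample the render texture produced by the previous pass. Use the XY channels to decide whether the pixel is inside or outside, and skip it if it is inside. For an outside pixel, check the ZW channels to see whether it already carries JFA information: if it does, compute the distance from the current pixel to the nearest pixel stored in ZW; if not, set that distance to a very large constant. Then sample the eight pixels around it at the current step distance (nominally a power of two). If a sampled pixel carries no JFA information, move on to the next one. If it does, compute the distance from the current pixel to the nearest pixel recorded in that sample's ZW channels and compare it with the current minimum. If it is smaller, the nearest pixel for the current pixel is the one recorded by that neighbour, so update the ZW channels of the current pixel, which also marks it as carrying JFA information.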

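- The step for sampling neighbours starts at half the width and height of the 2D texture, rounded up. Each pass uses half of the previous step, rounded up, until the step reaches (1, 1), at which point the final JFA pass runs.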

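- After the JFA passes, the nearest-pixel UVs for inside pixels and for outside pixels have to be combined and stored in a single texture. The result can be (nearestUV.x, nearestUV.y, 0, inside ? 1 : 0), or a signed-distance form such as (distance * (inside ? -1 : 1), 1).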

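- Probably depending on the platform, the texture sometimes ends up flipped vertically. UNITY_UV_STARTS_AT_TOP can help handle this, but in a compute shader you may have to define the macro yourself, so the flip is simply hard-coded in the shader here without any platform check.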
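The steps in this list can be sketched on the CPU before moving to the GPU. The Python below is a simplified stand-in and not the shader itself: it assumes a boolean mask as input, stores pixel indices in a single `nearest` array instead of UVs in four channels, and uses `None` where the shader uses -1. The half-pixel offsets cancel when both points are pixel centres, so plain index differences give the same distance. The function name is hypothetical.

```python
import math

def jfa_signed_distance(mask):
    """mask[y][x] is True for inside pixels; returns distances in pixels, negative inside."""
    h, w = len(mask), len(mask[0])
    # nearest[y][x]: index (x, y) of the closest opposite-kind pixel found so far.
    nearest = [[None] * w for _ in range(h)]

    def dist(x, y, target):
        return math.hypot(target[0] - x, target[1] - y)

    def one_pass(src, step_x, step_y):
        dst = [row[:] for row in src]  # ping-pong: read src, write dst
        for y in range(h):
            for x in range(w):
                best = src[y][x]
                best_d = dist(x, y, best) if best is not None else math.inf
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        if dx == 0 and dy == 0:
                            continue
                        # Clamp the sample position to the texture.
                        nx = min(max(x + dx * step_x, 0), w - 1)
                        ny = min(max(y + dy * step_y, 0), h - 1)
                        if mask[ny][nx] != mask[y][x]:
                            cand = (nx, ny)     # opposite kind: it is a seed itself
                        else:
                            cand = src[ny][nx]  # same kind: relay what it has found
                        if cand is not None:
                            d = dist(x, y, cand)
                            if d < best_d:
                                best, best_d = cand, d
                dst[y][x] = best
        return dst

    # Step starts at half the size rounded up and is halved (rounded up) each pass.
    step_x, step_y = (w + 1) // 2, (h + 1) // 2
    while True:
        nearest = one_pass(nearest, step_x, step_y)
        if step_x == 1 and step_y == 1:
            break
        step_x, step_y = (step_x + 1) // 2, (step_y + 1) // 2
    nearest = one_pass(nearest, 1, 1)  # one repeated (1, 1) pass

    # Compose: turn the nearest-pixel map into a signed distance.
    sdf = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            d = dist(x, y, nearest[y][x]) if nearest[y][x] is not None else math.inf
            sdf[y][x] = -d if mask[y][x] else d
    return sdf

mask = [[False] * 8 for _ in range(4)]
mask[1][2] = True  # a single inside pixel
sdf = jfa_signed_distance(mask)
print(sdf[1][2], sdf[1][6])
```

JFA is an approximation in general, so on complicated shapes a few pixels may end up with a slightly larger distance than the true one; the single-pixel example here is a case where the result is exact.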

JFAComputeShader.compute

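Following the approach above, three kernels are needed: one for initialization, one for the JFA pass, and one to compose the final texture. Compute shader keywords require Unity 2020 or later (I do not know the exact version), so for now the UNITY_2020_2_OR_NEWER macro is used to guard them.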

#pragma kernel CopyUVMain

#define PIXEL_OFFSET 0.5

Texture2D<float4> _InputTexture;

RWTexture2D<float4> _OutputTexture;

float4 _TextureSize;

float _Channel;

[numthreads(8, 8, 1)]

void CopyUVMain(uint3 id : SV_DispatchThreadID)

{

float2 samplePosition = id.xy + PIXEL_OFFSET;

if (any(samplePosition >= _TextureSize.xy))

return;

float4 inputTexture = _InputTexture.Load(int3(id.xy, 0));

float determin = 0;

switch ((uint)_Channel)

{

case 0:

determin = inputTexture.r;

break;

case 1:

determin = inputTexture.g;

break;

case 2:

determin = inputTexture.b;

break;

case 3:

determin = inputTexture.a;

break;

default:

break;

}

if (determin >= 0.5)

{

_OutputTexture[id.xy] = float4(-1, -1, samplePosition.x * _TextureSize.z, samplePosition.y * _TextureSize.w);

}

else

{

_OutputTexture[id.xy] = float4(samplePosition.x * _TextureSize.z, samplePosition.y * _TextureSize.w, -1, -1);

}

}

#pragma kernel JFAMain

float2 _Step;

static int2 directions[] =

{

int2(-1, -1),

int2(-1, 0),

int2(-1, 1),

int2(0, -1),

int2(0, 1),

int2(1, -1),

int2(1, 0),

int2(1, 1)

};

float4 JFAOutside(float4 inputTex, float2 idxy)

{

float4 outputTex = inputTex;

//cull inside

if (inputTex.x != -1)

{

float2 nearestUV = inputTex.zw;

float minDistance = 1e16;

//if had min distance in previous flooding

if (inputTex.z != -1)

{

minDistance = length(idxy + PIXEL_OFFSET - nearestUV * _TextureSize.xy);

}

bool hasMin = false;

for (uint i = 0; i < 8; i++)

{

int2 sampleOffset = int2(idxy + directions[i] * _Step);

sampleOffset = clamp(sampleOffset, int2(0, 0), int2(_TextureSize.xy) - 1);

float4 offsetTexture = _InputTexture.Load(int3(sampleOffset, 0));

//if had min distance in previous flooding

if (offsetTexture.z != -1)

{

float2 tempUV = offsetTexture.zw;

float tempDistance = length(idxy + PIXEL_OFFSET - tempUV * _TextureSize.xy);

if (tempDistance < minDistance)

{

hasMin = true;

minDistance = tempDistance;

nearestUV = tempUV;

}

}

}

if (hasMin)

{

outputTex = float4(inputTex.xy, nearestUV);

}

}

return outputTex;

}

float4 JFAInside(float4 inputTex, float2 idxy)

{

float4 outputTex = inputTex;

//cull outside

if (inputTex.z != -1)

{

float2 nearestUV = inputTex.xy;

float minDistance = 1e16;

//if had min distance in previous flooding

if (inputTex.x != -1)

{

minDistance = length(idxy + PIXEL_OFFSET - nearestUV * _TextureSize.xy);

}

bool hasMin = false;

for (uint i = 0; i < 8; i++)

{

int2 sampleOffset = int2(idxy + directions[i] * _Step);

sampleOffset = clamp(sampleOffset, int2(0, 0), int2(_TextureSize.xy) - 1);

float4 offsetTexture = _InputTexture.Load(int3(sampleOffset, 0));

//if had min distance in previous flooding

if (offsetTexture.x != -1)

{

float2 tempUV = offsetTexture.xy;

float tempDistance = length(idxy + PIXEL_OFFSET - tempUV * _TextureSize.xy);

if (tempDistance < minDistance)

{

hasMin = true;

minDistance = tempDistance;

nearestUV = tempUV;

}

}

}

if (hasMin)

{

outputTex = float4(nearestUV, inputTex.zw);

}

}

return outputTex;

}

[numthreads(8,8,1)]

void JFAMain(uint3 id : SV_DispatchThreadID)

{

float2 samplePosition = id.xy + PIXEL_OFFSET;

if (any(samplePosition >= _TextureSize.xy))

return;

float4 inputTexture = _InputTexture.Load(int3(id.xy, 0));

float4 outSide = JFAOutside(inputTexture, id.xy);

_OutputTexture[id.xy] = JFAInside(outSide, id.xy);

}

#pragma kernel ComposeMain

Texture2D<float4> _OriginalTexture;

#if UNITY_2020_2_OR_NEWER

#pragma multi_compile _ _USE_GRAYSCALE

#else

#define _USE_GRAYSCALE 0

#endif

[numthreads(8, 8, 1)]

void ComposeMain(uint3 id : SV_DispatchThreadID)

{

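// Skip threads that fall outside the texture, as the other kernels do.

if (any(id.xy >= (uint2)_TextureSize.xy))

return;

// Vertical flip, hard-coded rather than keyed on the platform.

uint2 reverseY = uint2(id.x, (uint)_TextureSize.y - 1 - id.y);

float4 inputTexture = _InputTexture.Load(int3(reverseY, 0));

float4 originalTexture = _OriginalTexture.Load(int3(reverseY, 0));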

float determin = 0;

switch ((uint)_Channel)

{

case 0:

determin = originalTexture.r;

break;

case 1:

determin = originalTexture.g;

break;

case 2:

determin = originalTexture.b;

break;

case 3:

determin = originalTexture.a;

break;

default:

break;

}

#if _USE_GRAYSCALE

float distance = 0;

if (determin >= 0.5)

{

distance = -length(reverseY + PIXEL_OFFSET - inputTexture.xy * _TextureSize.xy);

_OutputTexture[id.xy] = float4(distance, distance, distance, 1);

}

else

{

distance = length(reverseY + PIXEL_OFFSET - inputTexture.zw * _TextureSize.xy);

_OutputTexture[id.xy] = float4(distance, distance, distance, 0);

}

#else

if (determin >= 0.5)

{

_OutputTexture[id.xy] = float4(inputTexture.xy, 0, 1);

}

else

{

_OutputTexture[id.xy] = float4(inputTexture.zw, 0, 0);

}

#endif

}

JumpFlooding.cs

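There is nothing special about the C# script: prepare the render textures and the parameters and pass them to the compute shader. For easier visualization it uses a MonoBehaviour coroutine, so you have to enter Play mode and then click "Calculate SDF" on the script. What gets saved here is the texture that records the UV of the nearest pixel, which could be stored as jpg or png; saving the grayscale texture that records the signed distance requires a float format such as EXR. The SaveToTexture method below writes EXR in both cases.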
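Two of those parameters are worth working out by hand: the sequence of steps passed to the JFA kernel and the number of thread groups to dispatch. The Python sketch below mirrors the arithmetic of the coroutine and the dispatch code in this script as I read them; the function names are hypothetical and the group size of 8 is taken from the `numthreads(8, 8, 1)` attribute of the kernels.

```python
def jfa_steps(width, height):
    """Step sizes in the order the coroutine issues them."""
    steps = []
    step = ((width + 1) >> 1, (height + 1) >> 1)
    while True:  # do { ... } while (step.x > 1 || step.y > 1)
        steps.append(step)
        step = ((step[0] + 1) >> 1, (step[1] + 1) >> 1)
        if not (step[0] > 1 or step[1] > 1):
            break
    # The coroutine then runs two more passes at (1, 1).
    steps.append((1, 1))
    steps.append((1, 1))
    return steps

def dispatch_counts(width, height, group_x=8, group_y=8):
    """Thread groups needed to cover the texture, rounding up."""
    return (-(-width // group_x), -(-height // group_y), 1)

print(jfa_steps(512, 256))
print(dispatch_counts(100, 100))
```

Rounding the group count up means some threads land outside the texture when the size is not a multiple of 8, which is why the kernels start with a bounds check.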

using System.Collections;

using UnityEngine;

using UnityEditor;

public class JumpFlooding : MonoBehaviour

{

enum Channels

{

R, G, B, A

}

public Texture inputTexture;

public ComputeShader computeShader;

public float updateTime;

public MeshRenderer meshRenderer;

public bool useGrayScale = false;

private RenderTexture[] renderTextures;

private static void EnsureArray<T>(ref T[] array, int size, T initialValue = default(T))

{

if (array == null || array.Length != size)

{

array = new T[size];

for (int i = 0; i != size; i++)

array[i] = initialValue;

}

}

private static void EnsureRenderTexture(ref RenderTexture rt, RenderTextureDescriptor descriptor, string RTName)

{

if (rt != null && (rt.width != descriptor.width || rt.height != descriptor.height))

{

RenderTexture.ReleaseTemporary(rt);

rt = null;

}

if (rt == null)

{

RenderTextureDescriptor desc = descriptor;

desc.depthBufferBits = 0;

desc.msaaSamples = 1;

rt = RenderTexture.GetTemporary(desc);

rt.name = RTName;

if (!rt.IsCreated()) rt.Create();

}

}

public static void EnsureRT(ref RenderTexture[] rts, RenderTextureDescriptor descriptor)

{

EnsureArray(ref rts, 2);

EnsureRenderTexture(ref rts[0], descriptor, "JFA Tex One");

EnsureRenderTexture(ref rts[1], descriptor, "JFA Tex Two");

}

public void Calculate()

{

StartCoroutine(CalculateCoroutine());

}

public void CopyUV(Texture texture, RenderTexture renderTexture, uint channel)

{

int kernel = computeShader.FindKernel("CopyUVMain");

computeShader.GetKernelThreadGroupSizes(kernel, out uint x, out uint y, out uint z);

Vector3Int dispatchCounts = new Vector3Int(Mathf.CeilToInt((float)inputTexture.width / x),

Mathf.CeilToInt((float)inputTexture.height / y),

1);

computeShader.SetTexture(kernel, "_InputTexture", texture);

computeShader.SetTexture(kernel, "_OutputTexture", renderTexture);

computeShader.SetVector("_TextureSize", new Vector4(texture.width, texture.height, 1.0f / texture.width, 1.0f / texture.height));

computeShader.SetFloat("_Channel", (float)channel);

computeShader.Dispatch(kernel, dispatchCounts.x, dispatchCounts.y, dispatchCounts.z);

}

public void JFA(RenderTexture one, RenderTexture two, Vector2Int step, bool reverse)

{

int kernel = computeShader.FindKernel("JFAMain");

computeShader.GetKernelThreadGroupSizes(kernel, out uint x, out uint y, out uint z);

Vector3Int dispatchCounts = new Vector3Int(Mathf.CeilToInt((float)inputTexture.width / x),

Mathf.CeilToInt((float)inputTexture.height / y),

1);

if (reverse)

{

computeShader.SetTexture(kernel, "_InputTexture", two);

computeShader.SetTexture(kernel, "_OutputTexture", one);

}

else

{

computeShader.SetTexture(kernel, "_InputTexture", one);

computeShader.SetTexture(kernel, "_OutputTexture", two);

}

computeShader.SetVector("_TextureSize", new Vector4(inputTexture.width, inputTexture.height, 1.0f / inputTexture.width, 1.0f / inputTexture.height));

computeShader.SetVector("_Step", (Vector2)step);

computeShader.Dispatch(kernel, dispatchCounts.x, dispatchCounts.y, dispatchCounts.z);

}

public void Compose(RenderTexture one, RenderTexture two, Texture texture, uint channel, bool reverse)

{

int kernel = computeShader.FindKernel("ComposeMain");

computeShader.GetKernelThreadGroupSizes(kernel, out uint x, out uint y, out uint z);

Vector3Int dispatchCounts = new Vector3Int(Mathf.CeilToInt((float)inputTexture.width / x),

Mathf.CeilToInt((float)inputTexture.height / y),

1);

if (reverse)

{

computeShader.SetTexture(kernel, "_InputTexture", two);

computeShader.SetTexture(kernel, "_OutputTexture", one);

}

else

{

computeShader.SetTexture(kernel, "_InputTexture", one);

computeShader.SetTexture(kernel, "_OutputTexture", two);

}

computeShader.SetTexture(kernel, "_OriginalTexture", texture);

computeShader.SetFloat("_Channel", (float)channel);

#if UNITY_2020_2_OR_NEWER

if(useGrayScale)

{

computeShader.EnableKeyword("_USE_GRAYSCALE");

}

else

{

computeShader.DisableKeyword("_USE_GRAYSCALE");

}

#endif

computeShader.Dispatch(kernel, dispatchCounts.x, dispatchCounts.y, dispatchCounts.z);

}

public void Visualize(RenderTexture one, RenderTexture two, bool reverse)

{

MaterialPropertyBlock mpb = new MaterialPropertyBlock();

mpb.SetTexture("_MainTex", reverse ? two : one);

meshRenderer.SetPropertyBlock(mpb);

}

static public void SaveToTexture(string name, RenderTexture renderTexture, bool alphaIsTransparency)

{

RenderTexture currentRT = RenderTexture.active;

RenderTexture.active = renderTexture;

Texture2D texture2D = new Texture2D(renderTexture.width, renderTexture.height, TextureFormat.RGBAFloat, false);

texture2D.ReadPixels(new Rect(0, 0, renderTexture.width, renderTexture.height), 0, 0);

RenderTexture.active = currentRT;

System.IO.Directory.CreateDirectory("Assets/JumpFlooding/");

byte[] bytes = texture2D.EncodeToEXR();

string path = "Assets/JumpFlooding/" + name + ".exr";

System.IO.File.WriteAllBytes(path, bytes);

TextureImporter importer = (TextureImporter)AssetImporter.GetAtPath(path);

if (importer != null)

{

importer.alphaIsTransparency = alphaIsTransparency;

importer.sRGBTexture = false;

importer.mipmapEnabled = false;

AssetDatabase.ImportAsset(path);

}

Debug.Log("Saved to " + path);

AssetDatabase.Refresh();

}

IEnumerator CalculateCoroutine()

{

RenderTextureDescriptor desc = new RenderTextureDescriptor

{

width = inputTexture.width,

height = inputTexture.height,

volumeDepth = 1,

msaaSamples = 1,

graphicsFormat = UnityEngine.Experimental.Rendering.GraphicsFormat.R16G16B16A16_SFloat,

enableRandomWrite = true,

dimension = UnityEngine.Rendering.TextureDimension.Tex2D,

sRGB = false

};

EnsureRT(ref renderTextures, desc);

RenderTexture rtOne = renderTextures[0];

RenderTexture rtTwo = renderTextures[1];

CopyUV(inputTexture, rtOne, (uint)Channels.A);

yield return new WaitForSeconds(updateTime);

Shader.DisableKeyword("RENDERTEXTURE_UPSIDE_DOWN");

Vector2Int step = new Vector2Int((inputTexture.width + 1) >> 1, (inputTexture.height + 1) >> 1);

bool reverse = false;

do

{

Debug.Log(step);

JFA(rtOne, rtTwo, step, reverse);

reverse = !reverse;

Visualize(rtOne, rtTwo, reverse);

step = new Vector2Int((step.x + 1) >> 1, (step.y + 1) >> 1);

yield return new WaitForSeconds(updateTime);

} while (step.x > 1 || step.y > 1);

Debug.Log(new Vector2Int(1, 1));

JFA(rtOne, rtTwo, new Vector2Int(1, 1), reverse);

reverse = !reverse;

Visualize(rtOne, rtTwo, reverse);

yield return new WaitForSeconds(updateTime);

Debug.Log(new Vector2Int(1, 1));

JFA(rtOne, rtTwo, new Vector2Int(1, 1), reverse);

reverse = !reverse;

Visualize(rtOne, rtTwo, reverse);

yield return new WaitForSeconds(updateTime);

Compose(rtOne, rtTwo, inputTexture, (uint)Channels.A, reverse);

reverse = !reverse;

Shader.EnableKeyword("RENDERTEXTURE_UPSIDE_DOWN");

Visualize(rtOne, rtTwo, reverse);

SaveToTexture("WhatIsThis", reverse ? rtTwo : rtOne, false);

}

}

[CustomEditor(typeof(JumpFlooding))]

public class JumpFloodingEditor : Editor

{

private JumpFlooding jumpFlooding;

private void OnEnable()

{

jumpFlooding = (JumpFlooding)target;

}

public override void OnInspectorGUI()

{

base.OnInspectorGUI();

using (new EditorGUI.DisabledGroupScope(!Application.isPlaying))

{

if (GUILayout.Button("Calculate SDF", GUILayout.Height(30)))

{

jumpFlooding.Calculate();

}

}

}

}

JFAVisualize.shader

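This shader is meant for a mesh with a 2:1 aspect ratio: the left half shows the texture that records the UV of the nearest pixel, and the right half shows the grayscale signed-distance texture or a visualization of its outline. Pay attention to the sampling mode and to using pixel centres when computing the distance.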
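The pixel-centre point is easy to get wrong, so here is the arithmetic as a small Python sketch. It assumes the stored nearest-pixel UV already points at a pixel centre and that `width` and `height` are the texture size in pixels; the function name is made up.

```python
import math

def distance_to_nearest(uv, nearest_uv, width, height):
    """Distance in pixels from the texel containing uv to the texel stored in nearest_uv."""
    # Snap the interpolated uv to the centre of the texel it falls in.
    px = math.floor(uv[0] * width) + 0.5
    py = math.floor(uv[1] * height) + 0.5
    return math.hypot(nearest_uv[0] * width - px, nearest_uv[1] * height - py)

# Two different uvs inside the same texel of a 4x4 texture give the same distance.
a = distance_to_nearest((0.30, 0.30), (0.875, 0.375), 4, 4)
b = distance_to_nearest((0.45, 0.27), (0.875, 0.375), 4, 4)
print(a, b)
```

Without the snap, the distance would vary continuously across a texel even though point sampling returns the same nearest UV for the whole texel.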

Shader "Unlit/JFAVisualize"

{

Properties

{

_MainTex ("Texture", 2D) = "white" {}

_Distance ("Distance", float) = 10

}

HLSLINCLUDE

#include "Packages/com.unity.render-pipelines.core/ShaderLibrary/Common.hlsl"

#include "Packages/com.unity.render-pipelines.universal/ShaderLibrary/Core.hlsl"

#pragma multi_compile _ RENDERTEXTURE_UPSIDE_DOWN

Texture2D _MainTex;

float4 _MainTex_TexelSize;

SamplerState sampler_PointClamp;

float _Distance;

struct Attributes

{

float4 positionOS : POSITION;

float2 texcoord : TEXCOORD0;

};

struct Varyings

{

float4 positionCS : SV_POSITION;

float2 uv : TEXCOORD0;

};

Varyings Vert(Attributes input)

{

Varyings output = (Varyings)0;

VertexPositionInputs vertexInput = GetVertexPositionInputs(input.positionOS.xyz);

output.positionCS = vertexInput.positionCS;

output.uv = input.texcoord;

return output;

}

float4 Frag(Varyings input) : SV_TARGET

{

float2 upsideDown = input.uv;

#if RENDERTEXTURE_UPSIDE_DOWN

upsideDown = float2(input.uv.x, 1 - input.uv.y);

#endif

float2 uvOne = float2(upsideDown.x * 2, upsideDown.y);

float2 uvTwo = float2(upsideDown.x * 2 - 1, upsideDown.y);

float4 returnColor = 0;

if (input.uv.x >= 0.5)

{

float4 texColor = _MainTex.SampleLevel(sampler_PointClamp, uvTwo, 0);

#if RENDERTEXTURE_UPSIDE_DOWN

float2 realTexcoord = float2(input.uv.x * 2 - 1, input.uv.y);

realTexcoord = floor(realTexcoord* _MainTex_TexelSize.zw) + 0.5;

float distance = length(texColor.xy * _MainTex_TexelSize.zw - realTexcoord);

#else

float2 realTexcoord = uvTwo;

realTexcoord = floor(realTexcoord * _MainTex_TexelSize.zw) + 0.5;

float distance = length(texColor.xy * _MainTex_TexelSize.zw - realTexcoord);

#endif

float grayScale = 1 - smoothstep(0, _Distance, distance);

returnColor = float4(grayScale, grayScale, grayScale, 1);

}

else

{

returnColor = _MainTex.SampleLevel(sampler_PointClamp, uvOne, 0);

}

return returnColor;

}

ENDHLSL

SubShader

{

Tags { "RenderType"="Opaque" }

LOD 100

Pass

{

Name "JFA Visualize Pass"

Tags{"LightMode" = "UniversalForward"}

Cull Back

ZTest LEqual

ZWrite On

HLSLPROGRAM

#pragma vertex Vert

#pragma fragment Frag

ENDHLSL

}

}

}